📜 TensorFlow - XOR Implementation

📅 Last modified: 2020-12-10 06:06:32 🧑 Author: Mango

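In this chapter, we will learn about the XOR implementation using TensorFlow. Before starting with the implementation, let us look at the XOR truth table. It will also help us understand the XOR encryption and decryption process.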

| A | B | A XOR B |
|---|---|---------|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |
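As a quick check, the truth table can be reproduced with Python's built-in `^` (bitwise XOR) operator. This is a minimal sketch; the helper name `xor_table` is hypothetical and not part of the original tutorial.

```python
def xor_table():
    """Return (a, b, a XOR b) rows for every combination of two bits."""
    return [(a, b, a ^ b) for a in (0, 1) for b in (0, 1)]


# Print one row per input combination, in the same order as the table.
for a, b, out in xor_table():
    print(a, b, out)
```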

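The XOR cipher encrypts data by combining it with a key. It is hard to break with a brute-force attack (generating random candidate keys until one matches the real key) only when the key is long and random; a short, repeating key is easy to recover.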

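The idea behind the XOR cipher is to define an XOR encryption key and then XOR each character of the string the user wants to encrypt with that key. Applying the same key a second time restores the original string. The TensorFlow implementation in the rest of this chapter works on the XOR gate itself: it trains a small neural network to reproduce the truth table.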
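Before moving on to the TensorFlow code, the XOR cipher idea described above can be sketched in plain Python. This is an illustration only: the function name `xor_cipher`, the sample message, and the sample key are assumptions, not part of the original tutorial.

```python
from itertools import cycle


def xor_cipher(data: bytes, key: bytes) -> bytes:
    """XOR every byte of data with the key, repeating the key as needed."""
    if not key:
        raise ValueError("key must not be empty")
    return bytes(d ^ k for d, k in zip(data, cycle(key)))


message = b"TensorFlow"  # sample plaintext (assumption)
key = b"K3y"             # sample key (assumption)

encrypted = xor_cipher(message, key)    # encrypt
decrypted = xor_cipher(encrypted, key)  # the same call decrypts

print(encrypted)
print(decrypted)
```

Because `(d ^ k) ^ k == d` for any two bytes, one function serves for both encryption and decryption.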

    # Import the required modules.
    # Assumption: this listing uses the TensorFlow 1.x graph API (placeholders and
    # sessions). On TensorFlow 2.x that API is reached through tf.compat.v1, as
    # done here; on TensorFlow 1.x a plain "import tensorflow as tf" is enough.
    import tensorflow.compat.v1 as tf
    import numpy as np

    tf.disable_v2_behavior()

    """
    A simple TensorFlow implementation of an XOR gate, to understand the
    backpropagation algorithm
    """

    # placeholder for the input x
    x = tf.placeholder(tf.float64, shape=[4, 2], name="x")
    # placeholder for the desired output y
    y = tf.placeholder(tf.float64, shape=[4, 1], name="y")

    m = int(x.shape[0])  # number of training examples
    n = int(x.shape[1])  # number of features
    hidden_s = 2  # number of nodes in the hidden layer
    l_r = 1  # learning rate initialization

    # weight matrices; the extra row in each one holds the bias weights
    theta1 = tf.Variable(tf.random_normal([n + 1, hidden_s], dtype=tf.float64), name="theta1")
    theta2 = tf.Variable(tf.random_normal([hidden_s + 1, 1], dtype=tf.float64), name="theta2")

    # conducting forward propagation: prepend a column of ones (bias) to the input
    a1 = tf.concat([np.ones((m, 1)), x], 1)
    # the input of the first layer is multiplied by the weights of the first layer
    z1 = tf.matmul(a1, theta1)
    # the input of the second layer is the output of the first layer, passed
    # through the activation function, with a column of biases added
    a2 = tf.concat([np.ones((m, 1)), tf.sigmoid(z1)], 1)
    # the input of the second layer is multiplied by the weights
    z3 = tf.matmul(a2, theta2)
    # the output is passed through the activation function to obtain the final probability
    h3 = tf.sigmoid(z3)

    # cross-entropy cost, one value per training example
    cost_func = -tf.reduce_sum(y * tf.log(h3) + (1 - y) * tf.log(1 - h3), axis=1)

    # built-in TensorFlow optimizer that conducts gradient descent using the
    # specified learning rate to obtain the theta values
    optimiser = tf.train.GradientDescentOptimizer(learning_rate=l_r).minimize(cost_func)

    # setting the required X and Y values to perform the XOR operation
    X = [[0, 0], [0, 1], [1, 0], [1, 1]]
    Y = [[0], [1], [1], [0]]

    # initializing all variables, creating a session and running it
    init = tf.global_variables_initializer()
    sess = tf.Session()
    sess.run(init)

    # running gradient descent for each iteration and printing the hypothesis
    # obtained using the updated theta values
    for i in range(100000):
        # feed_dict sets the placeholder values
        sess.run(optimiser, feed_dict={x: X, y: Y})
        if i % 100 == 0:
            print("Epoch:", i)
            print("Hyp:", sess.run(h3, feed_dict={x: X, y: Y}))

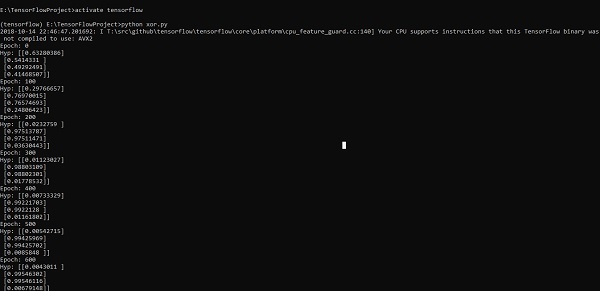

上面的代码行生成输出,如下面的屏幕快照所示-
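To make the forward pass and the cost function in the TensorFlow listing more concrete, here is a minimal pure-Python sketch of the same 2-2-1 network shape. The weights are hand-picked for illustration (an assumption, not values produced by training), and the helper names `sigmoid`, `forward`, and `cross_entropy` are hypothetical.

```python
import math


def sigmoid(z):
    """Logistic activation function."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, theta1, theta2):
    """Forward pass of a 2-2-1 network; row 0 of each theta holds bias weights."""
    a1 = [1.0] + list(x)  # prepend the bias input
    hidden = [
        sigmoid(sum(a1[i] * theta1[i][j] for i in range(len(a1))))
        for j in range(len(theta1[0]))
    ]
    a2 = [1.0] + hidden  # prepend the bias input for the output layer
    return sigmoid(sum(a2[i] * theta2[i][0] for i in range(len(a2))))


def cross_entropy(y, h):
    """Cross-entropy cost for one example, as in cost_func in the listing."""
    return -(y * math.log(h) + (1 - y) * math.log(1 - h))


# Hand-picked weights (assumption): hidden unit 1 acts like OR, hidden unit 2
# like NAND, and the output unit like AND, which together compute XOR.
THETA1 = [[-10.0, 30.0],   # bias weights
          [20.0, -20.0],   # weights from input A
          [20.0, -20.0]]   # weights from input B
THETA2 = [[-30.0], [20.0], [20.0]]

X = [[0, 0], [0, 1], [1, 0], [1, 1]]
Y = [0, 1, 1, 0]

hypotheses = [forward(x, THETA1, THETA2) for x in X]
costs = [cross_entropy(y, h) for y, h in zip(Y, hypotheses)]

for x, h, c in zip(X, hypotheses, costs):
    print(x, "hypothesis:", h, "cost:", c)
```

With these weights each hypothesis lands close to the matching XOR value, so the per-example cost is small. Gradient descent in the TensorFlow listing searches for weights with this property, starting from random values.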